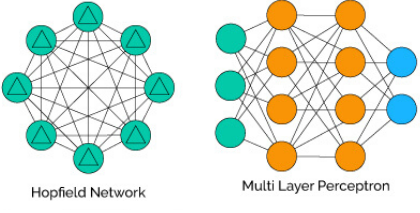

Hopfield vs MLP

- Hopfield

  - Unsupervised

  - Information retrieval

  - Learning and retrieval

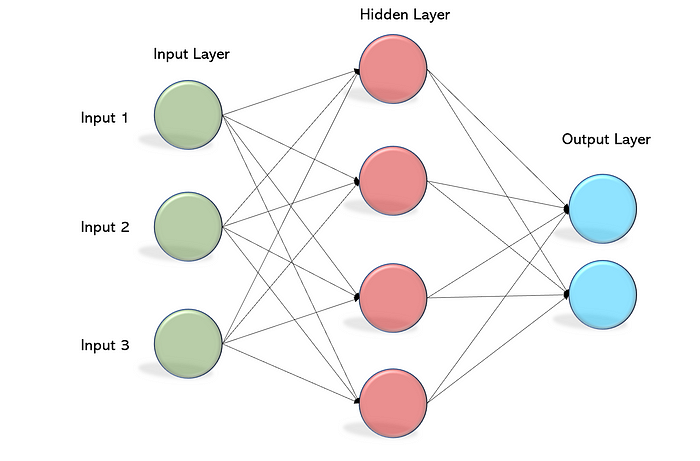
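To make "learning and retrieval" concrete, here is a minimal sketch of a Hopfield network, assuming bipolar (+1/-1) patterns, Hebbian storage and synchronous sign updates. The function names `hopfield_store` and `hopfield_retrieve` and the two stored patterns are illustrative choices, not taken from the slides.

```python
import numpy as np

def hopfield_store(patterns):
    """Hebbian learning: average of outer products with a zeroed diagonal."""
    patterns = np.asarray(patterns, dtype=float)
    n_units = patterns.shape[1]
    w = patterns.T @ patterns / n_units
    np.fill_diagonal(w, 0.0)  # no self-connections
    return w

def hopfield_retrieve(w, state, max_sweeps=10):
    """Apply synchronous sign updates until the state stops changing."""
    state = np.asarray(state, dtype=float).copy()
    for _ in range(max_sweeps):
        new_state = np.where(w @ state >= 0, 1.0, -1.0)
        if np.array_equal(new_state, state):
            break
        state = new_state
    return state

# Two hypothetical patterns to store (no labels are involved: unsupervised)
stored = np.array([[1, -1, 1, -1, 1, -1],
                   [1, 1, 1, -1, -1, -1]])
w = hopfield_store(stored)

# Retrieval: start from a corrupted copy of the first pattern
noisy = stored[0].copy()
noisy[0] = -1  # flip one bit
recalled = hopfield_retrieve(w, noisy)
```

The network is never told a target output; it only relaxes from the noisy cue towards a stored pattern, which is the information-retrieval behaviour listed above.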

- MLP

  - Supervised

  - Classification

  - Regression

  - Training and test

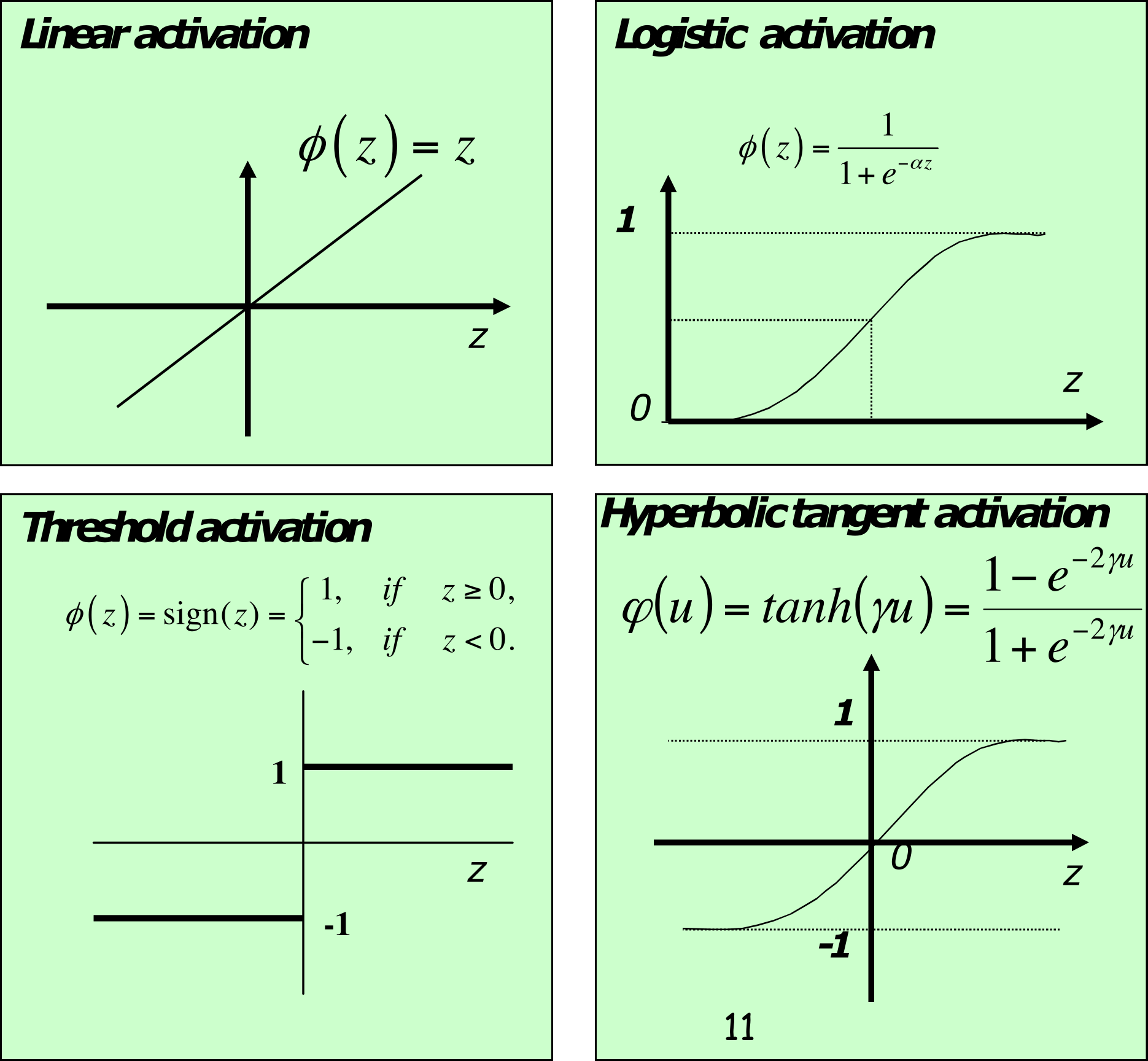

Popular activation functions

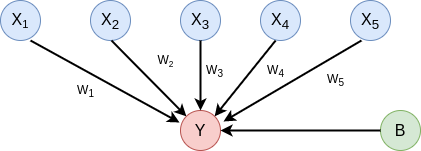

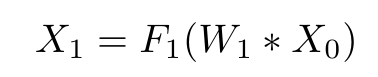
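As a rough companion to the figures, the sketch below defines three commonly used activation functions with NumPy. The sigmoid is the one used by the XOR code later in this notebook; including tanh and ReLU is an assumption about what a "popular" list contains, and the helper names are illustrative.

```python
import numpy as np

def sigmoid(x):
    """Logistic function, output in (0, 1)."""
    return 1.0 / (1.0 + np.exp(-x))

def sigmoid_prime(x):
    """Derivative of the sigmoid, written in terms of its own output."""
    s = sigmoid(x)
    return s * (1.0 - s)

def tanh(x):
    """Hyperbolic tangent, output in (-1, 1)."""
    return np.tanh(x)

def relu(x):
    """Rectified linear unit: zero for negative inputs, identity otherwise."""
    return np.maximum(0.0, x)

# Evaluate each function on a small grid of inputs
x = np.linspace(-3, 3, 7)
values = {f.__name__: f(x) for f in (sigmoid, tanh, relu)}
```

The form `s * (1 - s)` of the sigmoid derivative is the same factor that appears as `r_o*(1-r_o)` and `r_h*(1-r_h)` in the backpropagation code.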

Feed forward the information

- Compute the output of the first layer.

- Propagate layer by layer to obtain the output of the nth layer.

- Measure the error between the network output and the target.
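In the notation of the XOR code later in this notebook (input $r_i$, hidden output $r_h$, network output $r_o$, target $r_d$, weights $w_h$ and $w_o$), the three steps can be sketched as below. The layer superscripts $r^{(n)}$, $w^{(n)}$ are hypothetical notation introduced here, and the squared-error form of $E$ is an assumption chosen to match the mean squared error monitored in the training code.

```latex
\begin{aligned}
r_h &= g(w_h\, r_i), \qquad g(x) = \frac{1}{1 + e^{-x}} \\
r^{(n)} &= g\!\left(w^{(n)}\, r^{(n-1)}\right), \qquad r^{(0)} = r_i \\
E &= \tfrac{1}{2} \sum_k \left(r_{d,k} - r_{o,k}\right)^2
\end{aligned}
```

For the two-layer network used in the code, $r_o = r^{(2)} = g(w_o\, r_h)$.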

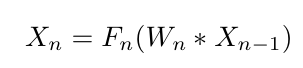

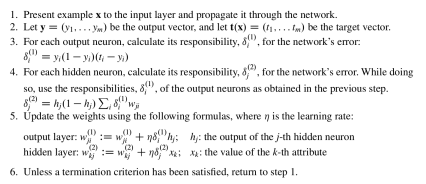

The learning algorithm (backpropagation)

Backpropagation pseudocode

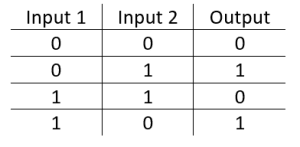
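One way to sanity-check a backpropagation step is to compare it with a finite-difference estimate of the gradient. The sketch below assumes a two-layer sigmoid network without biases and the loss $E = \tfrac{1}{2}\sum (r_d - r_o)^2$, mirroring the delta expressions in the XOR code of this notebook; the function names and the fixed random seed are illustrative.

```python
import numpy as np

def sigmoid(x):
    return 1.0 / (1.0 + np.exp(-x))

def forward(w_h, w_o, x):
    """Return hidden and output activations for one input vector."""
    r_h = sigmoid(w_h @ x)
    r_o = sigmoid(w_o @ r_h)
    return r_h, r_o

def loss(w_h, w_o, x, target):
    """Half the squared error between target and output."""
    _, r_o = forward(w_h, w_o, x)
    return 0.5 * np.sum((target - r_o) ** 2)

def backprop_steps(w_h, w_o, x, target):
    """Weight changes (before scaling by the learning rate) for both layers."""
    r_h, r_o = forward(w_h, w_o, x)
    d_o = r_o * (1 - r_o) * (target - r_o)   # output delta
    d_h = r_h * (1 - r_h) * (w_o.T @ d_o)    # hidden delta
    return np.outer(d_h, x), np.outer(d_o, r_h)

def numeric_step(w_h, w_o, x, target, layer, index, eps=1e-6):
    """Central-difference estimate of -dE/dw for a single weight."""
    plus = [w_h.copy(), w_o.copy()]
    minus = [w_h.copy(), w_o.copy()]
    plus[layer][index] += eps
    minus[layer][index] -= eps
    return -(loss(*plus, x, target) - loss(*minus, x, target)) / (2 * eps)

rng = np.random.default_rng(0)
w_h = rng.random((4, 2)) - 0.5
w_o = rng.random((1, 4)) - 0.5
x = np.array([1.0, 0.0])
target = np.array([1.0])

step_h, step_o = backprop_steps(w_h, w_o, x, target)
check_o = numeric_step(w_h, w_o, x, target, layer=1, index=(0, 2))
check_h = numeric_step(w_h, w_o, x, target, layer=0, index=(1, 0))
```

If the deltas are right, `step_o[0, 2]` and `step_h[1, 0]` agree with `check_o` and `check_h` up to finite-difference error, which confirms that the update moves the weights along the negative gradient of the error.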

In [5]:

import numpy as np
import matplotlib.pyplot as plt
from sklearn.metrics import mean_squared_error

# Number of neurons: input, hidden, output
N_i, N_h, N_o = 2, 4, 1

# XOR inputs, one pattern per column
r_i = np.matrix('0 1 0 1; 0 0 1 1')

# XOR targets, one per column
r_d = np.matrix('0 1 1 0')

r_i.T, r_d.T

Out[5]:

(matrix([[0, 0],
         [1, 0],
         [0, 1],
         [1, 1]]),
 matrix([[0],
         [1],
         [1],
         [0]]))

# Initialize the weights uniformly in [-0.5, 0.5)
# Hidden layer
w_h = np.random.rand(N_h, N_i) - 0.5
# Output layer
w_o = np.random.rand(N_o, N_h) - 0.5

eta = 0.7  # learning rate
training_steps = 10000
mse = []

for step in range(training_steps):
    # Select a training pattern at random (index kept separate from the loop counter)
    p = np.random.randint(r_i.shape[1])

    # Feed-forward the input to the hidden layer
    r_h = 1 / (1 + np.exp(-w_h * r_i[:, p]))

    # Feed-forward the hidden activity to the output layer
    r_o = 1 / (1 + np.exp(-w_o * r_h))

    # Output delta: sigmoid derivative times the output error
    d_o = np.multiply(np.multiply(r_o, 1 - r_o), r_d[:, p] - r_o)

    # Hidden delta: responsibility of each hidden unit for the error
    d_h = np.multiply(np.multiply(r_h, 1 - r_h), w_o.T * d_o)

    # Update the weights
    w_o = w_o + eta * (r_h * d_o.T).T
    w_h = w_h + eta * (r_i[:, p] * d_h.T).T

    # Test all patterns and record the mean squared error
    r_o_test = 1 / (1 + np.exp(-w_o * (1 / (1 + np.exp(-w_h * r_i)))))
    # Pass plain arrays: recent scikit-learn versions reject np.matrix inputs
    mse.append(mean_squared_error(np.asarray(r_d).ravel(), np.asarray(r_o_test).ravel()))

plt.plot(mse)
plt.xlabel('training step')
plt.ylabel('MSE')

Sources and resources

- https://aulasvirtuales.udla.edu.ec/udlapresencial/pluginfile.php/1622341/mod_resource/content/8/IA13.pdf

- https://anaconda.org/marsgr6/mlp/notebook

- https://paginas.fe.up.pt/~ec/files_1112/week_10_NN_and_SVM_JMM.pdf

- https://heartbeat.fritz.ai/backpropagation-broken-down-4c52bdcb4bb

- https://towardsdatascience.com/an-introduction-to-gradient-descent-and-backpropagation-81648bdb19b2

- https://codesachin.wordpress.com/2015/12/06/backpropagation-for-dummies/

- https://mattmazur.com/2015/03/17/a-step-by-step-backpropagation-example/